TL;DR

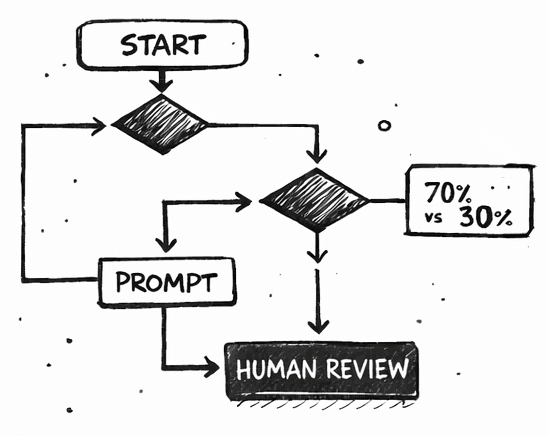

In a compliance-constrained tech education context, I architected a structured AI-assisted curriculum production system that used chained prompts, guardrails, and human approval stages to simulate agentic workflows before agent tooling was widely available. The system enabled scalable content production while preserving quality and accountability, resulting in ~70% faster course development and a 50% reduction in human effort.

Curious? Scroll down for the details…

Context & User Understanding

A growing education company (Future Group/WildCodeSchool) faced increasing pressure to ship high-quality, self-paced learning content under tight time and resource constraints.

Content designers were expected to:

Translate academic material into structured curricula

Adhere to strict pedagogical frameworks and learning outcomes

Stay within hard constraints (e.g. 50-hour content caps)

Produce exercises, quizzes, and materials at “real-world” quality

This resulted in high cognitive load, repeated manual work, and a growing risk of inconsistency and burnout, especially for designers juggling multiple roles.

The challenge was not the UI; it was how to scale expert decision-making without sacrificing quality.

Design Intent

Keep the Human Expert in the Loop

Instead of automating decisions blindly, the goal was to design an AI-assisted system where:

Human designers remained the subject-matter experts

AI accelerated processing, structuring, and iteration

Quality, compliance, and intent were preserved through explicit guardrails


The system needed to behave less like a generator and more like a guided assistant embedded in a workflow.

Guardrails & Failure Handling

Predictability Over Novelty

To make sure the system favoured predictability over novelty, several risks were actively designed for:

Hallucinations

Testing revealed that synthetic data generation failed beyond ~50 entries

Introduced hard constraints into prompt workflows
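One way to picture such a hard constraint is a per-request cap: never ask the model for more than about 50 entries at once, and split larger jobs into batches. This is a minimal sketch that assumes the constraint took that form. `generate_batch` stands in for whatever model call is used, and `MAX_ENTRIES_PER_REQUEST` and `generate_synthetic_data` are hypothetical names, not part of the original system.

```python
# Hypothetical sketch: enforce a per-request cap on synthetic data generation.
from typing import Callable

# Assumption: ~50 entries is the reliability ceiling found in testing
MAX_ENTRIES_PER_REQUEST = 50


def generate_synthetic_data(
    total: int,
    generate_batch: Callable[[int], list[dict]],
    max_per_request: int = MAX_ENTRIES_PER_REQUEST,
) -> list[dict]:
    """Collect `total` rows without ever asking for more than `max_per_request` at once."""
    if total < 0:
        raise ValueError("total must be non-negative")
    rows: list[dict] = []
    remaining = total
    while remaining > 0:
        size = min(remaining, max_per_request)
        batch = generate_batch(size)
        # Hard check: a batch of the wrong size is rejected instead of silently accepted
        if len(batch) != size:
            raise ValueError(f"expected {size} rows, got {len(batch)}")
        rows.extend(batch)
        remaining -= size
    return rows


# Usage with a stub generator (hypothetical) that records the batch sizes requested
calls: list[int] = []


def fake_generate_batch(n: int) -> list[dict]:
    calls.append(n)
    return [{"id": i} for i in range(n)]


rows = generate_synthetic_data(120, fake_generate_batch)
print(len(rows), calls)  # 120 [50, 50, 20]
```

Keeping the cap in one place means no individual prompt can quietly ask for 200 rows, and the size check turns a short or padded batch into a visible error rather than bad data.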

Overconfidence (human + AI)

Mandatory human approval gates

Explicit workflow protocols to prevent blind trust
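The combination of chained prompts and mandatory approval gates can be illustrated with a small pipeline in which each step's draft must be explicitly approved before it becomes the input to the next step. This is a minimal sketch under stated assumptions, not the system as built. `call_model` stands in for a real LLM call, `review` stands in for the human approver, and `Step`, `Review`, `run_chain`, and `RejectedStep` are hypothetical names.

```python
# Hypothetical sketch: a prompt chain where every step must pass a human approval gate.
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    # "{input}" is replaced with the previous step's approved output
    prompt_template: str


@dataclass
class Review:
    approved: bool
    notes: str = ""


class RejectedStep(Exception):
    """Raised when a reviewer rejects a draft; the chain stops there."""


def run_chain(
    steps: list[Step],
    initial_input: str,
    call_model: Callable[[str], str],
    review: Callable[[str, str], Review],
) -> dict[str, str]:
    """Run steps in order, feeding each approved draft into the next prompt."""
    approved: dict[str, str] = {}
    current = initial_input
    for step in steps:
        prompt = step.prompt_template.replace("{input}", current)
        draft = call_model(prompt)
        decision = review(step.name, draft)
        # Mandatory gate: no draft moves forward without explicit approval
        if not decision.approved:
            raise RejectedStep(f"{step.name} rejected: {decision.notes}")
        approved[step.name] = draft
        current = draft
    return approved


# Usage with stand-ins for the model and the human reviewer (both hypothetical)
def fake_model(prompt: str) -> str:
    return f"[draft for: {prompt}]"


def approve_all(step_name: str, draft: str) -> Review:
    return Review(approved=True)


steps = [
    Step("outline", "Outline a course from: {input}"),
    Step("exercises", "Write exercises for: {input}"),
]
result = run_chain(steps, "intro to SQL", fake_model, approve_all)
print(list(result))  # ['outline', 'exercises']
```

Raising on rejection, rather than retrying automatically, keeps the human decision explicit; a real workflow might instead route the rejected draft back to the designer along with the reviewer's notes.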

Model Drift

Regular control tests to ensure prompts continued to behave predictably

One conversation (chat) per goal (prompt)
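Control tests and the one-conversation-per-goal rule fit together naturally: each control prompt runs in a brand-new message history, and a simple check flags any output that no longer meets expectations. This sketch assumes a chat-style model that takes a list of `{"role", "content"}` messages, and it replaces the model with a deterministic stub. `ControlTest`, `Conversation`, and `run_control_tests` are hypothetical names, and the checks shown are illustrative only.

```python
# Hypothetical sketch: regular control tests, each run in its own fresh conversation.
from dataclasses import dataclass, field
from typing import Callable

Message = dict[str, str]


@dataclass
class Conversation:
    """One conversation per goal: an isolated message history."""
    goal: str
    messages: list[Message] = field(default_factory=list)


@dataclass
class ControlTest:
    goal: str
    prompt: str
    # Returns True if the output still meets expectations
    check: Callable[[str], bool]


def run_control_tests(
    tests: list[ControlTest],
    call_model: Callable[[list[Message]], str],
) -> list[str]:
    """Return the goals whose control check failed (a possible sign of drift)."""
    failures: list[str] = []
    for test in tests:
        # Never reuse history across goals: each test starts from an empty conversation
        convo = Conversation(goal=test.goal)
        convo.messages.append({"role": "user", "content": test.prompt})
        reply = call_model(convo.messages)
        convo.messages.append({"role": "assistant", "content": reply})
        if not test.check(reply):
            failures.append(test.goal)
    return failures


# Usage with a deterministic stub in place of a real model (hypothetical)
def fake_model(messages: list[Message]) -> str:
    return "\n".join(f"Q{i}: ..." for i in range(1, 6))


tests = [
    ControlTest("quiz", "Write 5 quiz questions on loops.",
                lambda out: out.count("Q") == 5),
    ControlTest("summary", "Summarise the module on loops.",
                lambda out: "loop" in out.lower()),
]
failed = run_control_tests(tests, fake_model)
print(failed)  # ['summary']
```

Because every test starts from an empty history, a failing check points at the prompt or the model rather than at leftover context from an earlier chat.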